Object Space Algorithms

General Idea:

    For each object
        For each polygon
            For each edge (v1,v2)
                determine how many faces cover v1
                    {for each object, for each polygon}
                determine all edges that intersect (v1,v2):
                    For each object
                        For each edge
                            intersect 2D projection of (v1,v2) with edge
                            if (v1,v2) behind edge
                                record x,y,z intersection and +/- 1
                sort intersections
                keep running count (v1,v2): if count 0, visible
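The last two steps of the outline (sort the intersections, then keep a running count) can be sketched as follows. This is only an illustration under assumptions not in the notes: each intersection is stored as a parameter `t` along the edge (0 at v1, 1 at v2) paired with its +/- 1 change, and the function name `visible_intervals` is hypothetical.

```python
def visible_intervals(initial_count, crossings):
    """Return the visible parameter intervals of an edge v1 -> v2.

    initial_count: number of faces covering v1 (the count at t = 0).
    crossings: list of (t, delta) pairs, 0 < t < 1, delta = +1 or -1
               (assumed representation of the recorded intersections).
    """
    intervals = []
    count = initial_count
    start = 0.0
    for t, delta in sorted(crossings):       # sort intersections along the edge
        if count == 0 and t > start:         # count 0 means this piece is visible
            intervals.append((start, t))
        count += delta                       # update the running count
        start = t
    if count == 0 and start < 1.0:           # last piece, up to v2
        intervals.append((start, 1.0))
    return intervals


# v1 is covered by one face; the edge emerges at t = 0.25 and is hidden again at t = 0.75.
print(visible_intervals(1, [(0.75, +1), (0.25, -1)]))  # [(0.25, 0.75)]
```
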

- Roberts - 1st Hidden Line Algorithm

- - Test each edge to see if it is obstructed by volume

of an object.

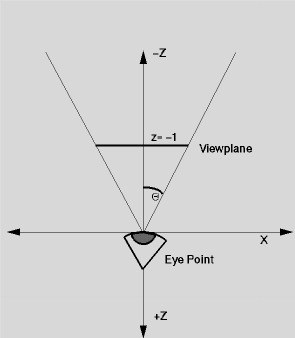

- - Write parametric equation for viewpoint to point on the edge.

- - P is inside a convex objectj if P is on the inside

of all planes that form j.

- - All objects must be convex.

- - O(n2).

- - Uses spatial coherence - tests edges against object

volumes.
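The inside test and the parametric sight line can be illustrated with a short sketch. It is not Roberts's original formulation, only a simplified version under stated assumptions: each plane is a tuple `(a, b, c, d)` oriented so that `a*x + b*y + c*z + d >= 0` on the inside of the object, and the names `inside_convex`, `sight_line_blocked` and `UNIT_CUBE` are hypothetical.

```python
def plane_value(plane, p):
    a, b, c, d = plane
    return a * p[0] + b * p[1] + c * p[2] + d


def inside_convex(p, planes, eps=1e-9):
    """P is inside a convex object if it is on the inside of all its planes."""
    return all(plane_value(pl, p) >= -eps for pl in planes)


def sight_line_blocked(point, eye, planes, eps=1e-9):
    """True if the segment Q(s) = point + s*(eye - point), 0 <= s <= 1,
    passes through the interior of the convex volume."""
    s_min, s_max = 0.0, 1.0
    direction = tuple(e - p for p, e in zip(point, eye))
    for a, b, c, d in planes:
        f0 = plane_value((a, b, c, d), point)                        # value at s = 0
        df = a * direction[0] + b * direction[1] + c * direction[2]  # change from s = 0 to 1
        if abs(df) < eps:            # sight line parallel to this plane
            if f0 < -eps:
                return False         # entirely on the outside of this plane
            continue
        s = -f0 / df                 # where the sight line meets the plane
        if df > 0:
            s_min = max(s_min, s)    # inside only for larger s
        else:
            s_max = min(s_max, s)    # inside only for smaller s
        if s_min > s_max:
            return False
    return s_max - s_min > eps       # touching the surface only does not count


# Assumed example object: the unit cube 0 <= x, y, z <= 1, planes pointing inward.
UNIT_CUBE = [(1, 0, 0, 0), (-1, 0, 0, 1),
             (0, 1, 0, 0), (0, -1, 0, 1),
             (0, 0, 1, 0), (0, 0, -1, 1)]

eye = (0.5, 0.5, 5.0)
print(sight_line_blocked((0.5, 0.5, -1.0), eye, UNIT_CUBE))  # True: the cube is in the way
print(sight_line_blocked((0.5, 0.5, 1.0), eye, UNIT_CUBE))   # False: point on the near face
```

A point on the object's own front surface only touches the volume at s = 0, so it is not reported as blocked, while a point on a back face is.
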

- Appel (Loutrel, Galimberti similar) - Quantitative Invisibility - Hidden Line

  - Quantitative invisibility of a point = number of relevant faces that lie between the point and the viewpoint.

  - Need to compute the quantitative invisibility of every point on every edge.

  - Edge coherence: the quantitative invisibility of an edge only changes where the projection of that edge into the picture plane crosses the projection of a contour edge. At this intersection, quantitative invisibility changes by +1 or -1.

  - Edges are divided into segments at these intersections.
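The edge-coherence step, finding where a projected edge crosses projected contour edges and dividing it there, might look like the sketch below. It works purely in the 2D picture plane and assumes points are `(x, y)` tuples. The function names are hypothetical, and the depth comparison that decides whether a crossing counts and whether the change is +1 or -1 is deliberately left out.

```python
def crossing_parameter(a0, a1, b0, b1, eps=1e-12):
    """Parameter t in (0, 1) along a0 -> a1 where it crosses segment b0 -> b1,
    or None if the two projected segments do not cross."""
    rx, ry = a1[0] - a0[0], a1[1] - a0[1]
    sx, sy = b1[0] - b0[0], b1[1] - b0[1]
    denom = rx * sy - ry * sx               # 2D cross product r x s
    if abs(denom) < eps:
        return None                         # parallel or collinear: ignored in this sketch
    qx, qy = b0[0] - a0[0], b0[1] - a0[1]
    t = (qx * sy - qy * sx) / denom         # position along the edge
    u = (qx * ry - qy * rx) / denom         # position along the contour edge
    if 0.0 < t < 1.0 and 0.0 <= u <= 1.0:
        return t
    return None


def split_at_contours(a0, a1, contour_edges):
    """Divide the edge a0 -> a1 into parameter segments at contour crossings."""
    ts = set()
    for b0, b1 in contour_edges:
        t = crossing_parameter(a0, a1, b0, b1)
        if t is not None:
            ts.add(t)
    bounds = [0.0] + sorted(ts) + [1.0]
    return list(zip(bounds, bounds[1:]))


contours = [((1, -1), (1, 1)), ((3, -1), (3, 1)), ((5, -1), (5, 1))]
print(split_at_contours((0, 0), (4, 0), contours))
# [(0.0, 0.25), (0.25, 0.75), (0.75, 1.0)]
```

Quantitative invisibility is constant within each returned segment, so it only needs to be evaluated once per segment rather than at every point.
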

- Weiler-Atherton - Area - HS or HL (hidden surface or hidden line)

  - Perform a preliminary depth sort of the polygons.

  - Clip against the polygon nearest the eye-point; this creates an inside list and an outside list.

  - Remove all polygons in the inside list that lie behind the polygon nearest the eye-point.

  - Recursively subdivide, if required.

  - Perform a final depth sort to remove any ambiguities.
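As a small illustration of the first step only, the preliminary depth sort could be sketched as below. The polygon clipping, the inside and outside lists, and the recursion are not shown. The sketch assumes polygons are lists of `(x, y, z)` vertices and that smaller z means nearer the eye-point; the function name is hypothetical.

```python
def preliminary_depth_sort(polygons):
    """Order polygons nearest-first by their closest vertex.

    Assumption: smaller z is nearer the eye-point.
    """
    return sorted(polygons, key=lambda poly: min(z for _, _, z in poly))


polygons = [
    [(0, 0, 5), (1, 0, 5), (0, 1, 6)],
    [(0, 0, 2), (1, 0, 3), (0, 1, 2)],
    [(0, 0, 4), (1, 0, 1), (0, 1, 4)],
]
ordered = preliminary_depth_sort(polygons)
clip_polygon = ordered[0]   # polygon nearest the eye-point, used for clipping
remaining = ordered[1:]     # polygons to be clipped against it
```

This ordering is only approximate, because polygons can overlap in depth, which is why the algorithm ends with a final depth sort to remove ambiguities.
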