Our agent is hungry and unhappy, and wants to go on a vacation, sunbathe, and eat well. Our agent starts out rich, but only has limited options, some of which will consume a substantial part of its wealth.

(a) (7 pts.) Describe only the Happy predicate using the situation calculus. You should have one or more possibility axioms (one for each relevant action) and one or more successor-state axioms (one for each relevant action). These axioms should characterize how the state of the Happy predicate changes as a result of the actions in the domain, in terms of the domain predicates listed above.

(You may want to refer to pages 330-333 in the book.)

(b) (7 pts.) Describe the actions in this domain as STRIPS operators. Be sure to include all preconditions, add lists, and delete lists. (See pages 377-378.)

(c) (5 pts.) Show two different legal plans (sequences of actions) for achieving the goal described above from the given initial state.

(d) (6 pts.) How many legal plans are there for this goal? Explain your answer. Does the answer change depending on whether or not repeated states are allowed?

Now suppose that the agent decides to satisfy its Happiness goal using the Sunbathe action. Insert this action into the plan, showing all dependencies. Will this plan succeed? If so, complete the partial plan, resolving any threats that arise. If not, complete the partial plan to the point where planning fails, and explain the source of the failure.

| Outlook | Temp (F) | Humidity (%) | Windy? | Class |
| --- | --- | --- | --- | --- |
| sunny | 75 | 70 | true | Play |
| sunny | 80 | 90 | true | Don't Play |
| sunny | 85 | 85 | false | Don't Play |
| sunny | 72 | 95 | false | Don't Play |
| sunny | 69 | 70 | false | Play |
| overcast | 72 | 90 | true | Play |
| overcast | 83 | 78 | false | Play |
| overcast | 64 | 65 | true | Play |
| overcast | 81 | 75 | false | Play |
| rain | 71 | 80 | true | Don't Play |
| rain | 65 | 70 | true | Don't Play |
| rain | 75 | 80 | false | Play |
| rain | 68 | 80 | false | Play |
| rain | 70 | 96 | false | Play |

(a) (10 pts.) At the root node for a decision tree in this domain, what are the information gains associated with the Outlook and Humidity attributes? Use a threshold of 75 for humidity (i.e., assume a binary split: humidity <= 75 / humidity > 75).
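One way to check a hand calculation for (a) is a short script. The sketch below assumes the usual definition of information gain (entropy of the class labels minus the size-weighted entropy of each partition, in bits). The names `ROWS`, `entropy`, and `information_gain` are illustrative and not part of the assignment; only the Outlook, Humidity, and Class columns of the table are used.

```python
from collections import Counter
from math import log2

# (outlook, humidity, class) copied row by row from the table above.
ROWS = [
    ("sunny", 70, "Play"), ("sunny", 90, "Don't Play"),
    ("sunny", 85, "Don't Play"), ("sunny", 95, "Don't Play"),
    ("sunny", 70, "Play"), ("overcast", 90, "Play"),
    ("overcast", 78, "Play"), ("overcast", 65, "Play"),
    ("overcast", 75, "Play"), ("rain", 80, "Don't Play"),
    ("rain", 70, "Don't Play"), ("rain", 80, "Play"),
    ("rain", 80, "Play"), ("rain", 96, "Play"),
]


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def information_gain(rows, key):
    """Class entropy minus the weighted entropy after grouping rows by key(row)."""
    groups = {}
    for row in rows:
        groups.setdefault(key(row), []).append(row[-1])
    remainder = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return entropy([row[-1] for row in rows]) - remainder


gain_outlook = information_gain(ROWS, lambda r: r[0])
# Binary split at the threshold stated in the question: humidity <= 75 vs. > 75.
gain_humidity = information_gain(ROWS, lambda r: r[1] <= 75)

print(f"Gain(Outlook)  = {gain_outlook:.4f}")
print(f"Gain(Humidity) = {gain_humidity:.4f}")
```

Passing the split as a `key` function lets the same routine handle both the three-valued Outlook attribute and the thresholded Humidity attribute.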

(b) (10 pts.) Again at the root node, what are the gain ratios associated with the Outlook and Humidity attributes (using the same threshold as in (a))?
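For (b), a similar sketch can be used to check a hand calculation, assuming the common definition of gain ratio: information gain divided by the split information, where split information is the entropy of the partition sizes themselves. The helper names (`ROWS`, `entropy`, `gain_ratio`) are illustrative assumptions, and only the Outlook, Humidity, and Class columns are used.

```python
from collections import Counter
from math import log2

# (outlook, humidity, class) copied row by row from the table above.
ROWS = [
    ("sunny", 70, "Play"), ("sunny", 90, "Don't Play"),
    ("sunny", 85, "Don't Play"), ("sunny", 95, "Don't Play"),
    ("sunny", 70, "Play"), ("overcast", 90, "Play"),
    ("overcast", 78, "Play"), ("overcast", 65, "Play"),
    ("overcast", 75, "Play"), ("rain", 80, "Don't Play"),
    ("rain", 70, "Don't Play"), ("rain", 80, "Play"),
    ("rain", 80, "Play"), ("rain", 96, "Play"),
]


def entropy(values):
    """Shannon entropy (in bits) of a list of discrete values."""
    total = len(values)
    return -sum((n / total) * log2(n / total) for n in Counter(values).values())


def gain_ratio(rows, key):
    """Information gain of the split key(row), divided by its split information."""
    branch_of = [key(row) for row in rows]
    labels = [row[-1] for row in rows]
    remainder = 0.0
    for branch in set(branch_of):
        subset = [lab for b, lab in zip(branch_of, labels) if b == branch]
        remainder += len(subset) / len(rows) * entropy(subset)
    gain = entropy(labels) - remainder
    split_info = entropy(branch_of)  # entropy of the branch sizes
    return gain / split_info


ratio_outlook = gain_ratio(ROWS, lambda r: r[0])
# Same threshold as in (a): humidity <= 75 vs. > 75.
ratio_humidity = gain_ratio(ROWS, lambda r: r[1] <= 75)

print(f"GainRatio(Outlook)  = {ratio_outlook:.4f}")
print(f"GainRatio(Humidity) = {ratio_humidity:.4f}")
```

Note that this sketch would divide by zero for a split that sends every instance down one branch; neither split in the question does so.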

(c) (5 pts.) Suppose you build a decision tree that splits on the Outlook attribute at the root node. How many child nodes are there at the first level of the decision tree? Which branches require a further split in order to create leaf nodes with instances belonging to a single class? For each of these branches, which attribute can you split on to complete the decision tree building process at the next level (i.e., so that at level 2, there are only leaf nodes)? Draw the resulting decision tree, showing the decisions (class predictions) at the leaves.
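A tree drawn by hand for (c) can be sanity-checked with a short script. The sketch below groups the table rows by Outlook, finds the branches that still mix both classes, and reports which candidate test separates each one completely. It is only an illustration under two assumptions: the candidate tests are limited to Windy and the humidity <= 75 threshold from (a), and Temperature is left out. The names `ROWS`, `TESTS`, `is_pure`, and `separating_tests` are hypothetical.

```python
from collections import defaultdict

# (outlook, humidity, windy, class) copied row by row from the table above.
ROWS = [
    ("sunny", 70, True, "Play"), ("sunny", 90, True, "Don't Play"),
    ("sunny", 85, False, "Don't Play"), ("sunny", 95, False, "Don't Play"),
    ("sunny", 70, False, "Play"), ("overcast", 90, True, "Play"),
    ("overcast", 78, False, "Play"), ("overcast", 65, True, "Play"),
    ("overcast", 75, False, "Play"), ("rain", 80, True, "Don't Play"),
    ("rain", 70, True, "Don't Play"), ("rain", 80, False, "Play"),
    ("rain", 80, False, "Play"), ("rain", 96, False, "Play"),
]

# Assumed candidate tests for the second level (Temperature is not considered).
TESTS = {
    "humidity <= 75": lambda r: r[1] <= 75,
    "windy": lambda r: r[2],
}


def is_pure(rows):
    """True when all rows share a single class label."""
    return len({r[-1] for r in rows}) <= 1


def separating_tests(rows):
    """Names of the candidate tests whose partitions are all pure."""
    found = []
    for name, test in TESTS.items():
        parts = defaultdict(list)
        for r in rows:
            parts[test(r)].append(r)
        if all(is_pure(p) for p in parts.values()):
            found.append(name)
    return found


# First level: one branch per Outlook value.
branches = defaultdict(list)
for r in ROWS:
    branches[r[0]].append(r)

# Second level: for each impure branch, which single test finishes the tree?
needs_split = {
    outlook: separating_tests(rows)
    for outlook, rows in branches.items()
    if not is_pure(rows)
}

print("children at level 1:", len(branches))
for outlook, tests in needs_split.items():
    print(f"{outlook}: split further on {tests}")
```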

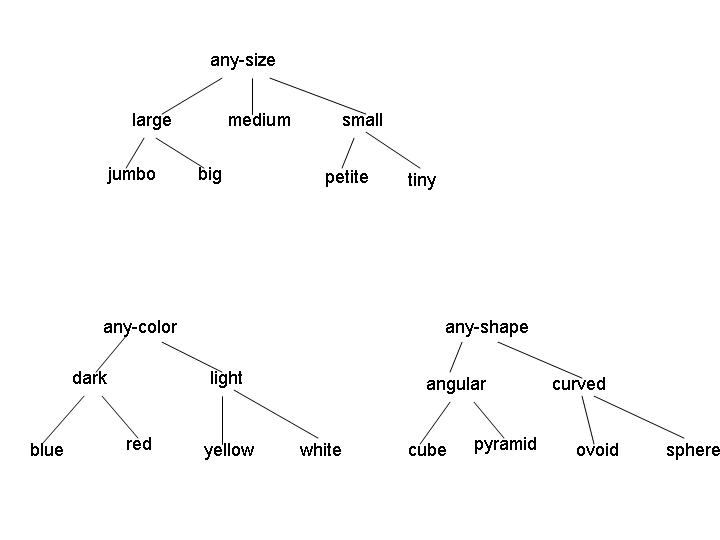

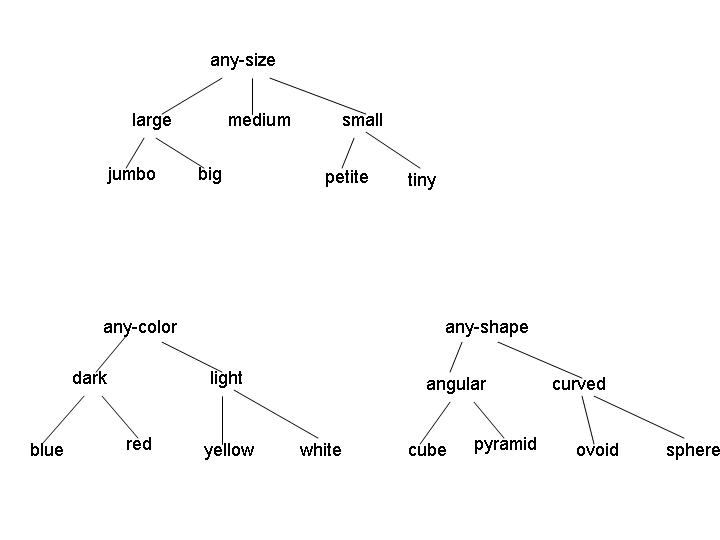

Examples of legal concepts in this domain include [medium light angular], [large red sphere], and [any-size any-color any-shape]. No disjunctive concepts are allowed, other than the implicit disjunctions represented by the internal nodes in the attribute hierarchies. (For example, the concept [large [red v yellow] curved] isn't allowed.)

(a) (5 points) Consider the initial version space for learning in this domain. What is the G set? How many elements are in the S set? Give one representative member of the initial S set.

(b) (5 points) Suppose the first instance I1 is a positive example, with attribute values [jumbo yellow ovoid]. After processing this instance, what are the G and S sets?

(c) (5 points) Now suppose the learning algorithm receives instance I2, a negative example, with attribute values [jumbo red pyramid]. What are the G and S sets after processing this example?

(d) (10 points - 2.5 points each) For this question, you should start with the version space that remains after I1 and I2 are processed. For each of the following combinations of instance type and events, give a single instance of the specified type that would cause the indicated event, if such an instance exists. If no such instance exists, explain why.

Bonus: (e) (5 points) If learning ends after I1 and I2 are processed, how many concepts remain in the version space?