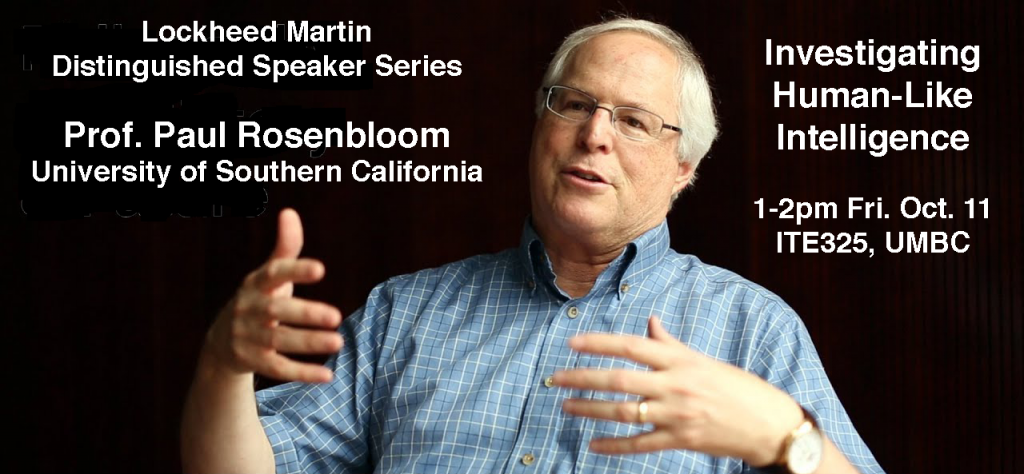

Lockheed Martin Distinguished Speaker Series

Localization of Brain Activations Based on EEG Recordings and Sparse Signal Recovery Theory

Professor Athina Petropulu

Electrical and Computer Engineering, Rutgers University

1:00-2:00pm Friday, 1 November 2019

Room 104, ITE Building, UMBC

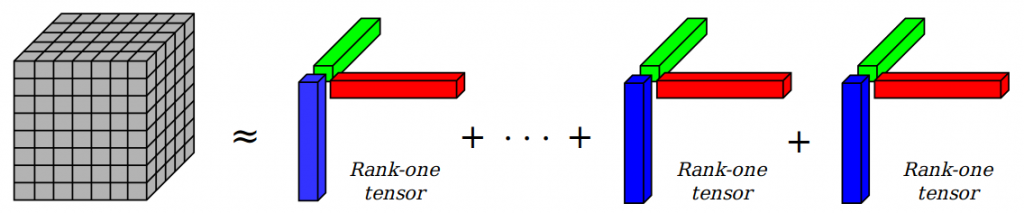

Sparse signal recovery is often formulated as an l1-norm minimization problem. However, unless certain conditions are satisfied, there is no guarantee that the least l1-norm solution will also be a sparsest solution. In this talk, we show that by appropriately weighting the sensing matrix, we can formulate an l1-norm minimization problem whose solution is guaranteed to be one of the sparsest solutions. The weights can be obtained from a low-resolution estimate of the sparse signal, computed, for example, via a method that does not encourage sparsity.
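As a rough illustration of the idea of weighting the sensing matrix, the following Python sketch solves a toy weighted l1-norm minimization as a linear program. Everything specific here is an assumption made for illustration and is not taken from the talk: the 2x3 matrix `A`, the hard-coded coarse estimate `x_low`, the weight rule `w_i = |x_low_i| + 1e-3`, and the helper name `weighted_l1_min`. The abstract does not spell out the speaker's actual weighting scheme, so this should be read only as one simple instance of column weighting.

```python
import numpy as np
from scipy.optimize import linprog


def weighted_l1_min(A, y, w=None):
    """Solve min sum_i |x_i| / w_i subject to A x = y.

    Implemented by weighting the columns of A (A @ diag(w)), solving a
    plain l1 problem in z, and mapping back with x = w * z.
    With w=None this reduces to ordinary l1-norm minimization.
    """
    m, n = A.shape
    w = np.ones(n) if w is None else np.asarray(w, dtype=float)
    AW = A * w  # broadcasting scales column i of A by w[i]
    # Split z = u - v with u, v >= 0 so that |z| sums to 1^T (u + v).
    c = np.ones(2 * n)
    A_eq = np.hstack([AW, -AW])
    res = linprog(c, A_eq=A_eq, b_eq=y, bounds=(0, None), method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    z = res.x[:n] - res.x[n:]
    return w * z


# Toy sensing matrix (hypothetical): the third column has a small norm,
# so plain l1 minimization prefers the first two columns.
A = np.array([[1.0, 0.0, 0.4],
              [0.0, 1.0, 0.4]])
x_true = np.array([0.0, 0.0, 1.0])  # 1-sparse ground truth
y = A @ x_true

# Hypothetical low-resolution estimate: blurry, but largest near the true source.
x_low = np.array([0.2, 0.2, 0.8])

x_plain = weighted_l1_min(A, y)
x_weighted = weighted_l1_min(A, y, w=np.abs(x_low) + 1e-3)

print("plain l1:   ", np.round(x_plain, 3))
print("weighted l1:", np.round(x_weighted, 3))
```

In this toy case the unweighted problem settles on a two-entry solution with a smaller l1 norm than the true signal, while the weighted problem recovers the 1-sparse signal. How well this works in general depends on the quality of the coarse estimate, which is hand-picked here.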

The proposed weighting approach is a good candidate for electroencephalography (EEG) sparse source localization, where measurements from sensors placed on a subject’s head are used to localize activations inside the brain. In many cases, the locations of these activations are related to the subject’s reactions or intentions, and estimating them via a non-invasive and inexpensive modality like EEG can find applications in several domains, including cognitive and clinical neuroscience as well as brain-computer interfaces (BCIs). In response to simple tasks, the brain activations are sparse, and thus their localization based on the EEG recordings can be formulated as a sparse signal recovery problem. In this case, the corresponding basis matrix, referred to as the lead field matrix, has high mutual coherence, which means that the least l1-norm solution will not necessarily lead to the brain sources. In spite of the high coherence of the lead field matrix, the proposed weighting approach can still estimate the sources inside the brain. In this talk, this is demonstrated by localizing active sources in the brain corresponding to an auditory task from EEG recordings of human subjects.
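For readers unfamiliar with the term, the mutual coherence of a matrix is the largest absolute normalized inner product between two distinct columns. The short Python sketch below computes it for a small made-up matrix; the helper name `mutual_coherence` and the example matrix `L_toy` are illustrative assumptions and do not represent a real lead field matrix.

```python
import numpy as np


def mutual_coherence(A):
    """Largest |<a_i, a_j>| / (||a_i|| ||a_j||) over distinct columns i != j."""
    cols = A / np.linalg.norm(A, axis=0, keepdims=True)  # unit-norm columns
    G = np.abs(cols.T @ cols)  # absolute Gram matrix of normalized columns
    np.fill_diagonal(G, 0.0)   # ignore each column's product with itself
    return float(G.max())


# Hypothetical 2-sensor, 3-source matrix with two nearly parallel columns.
L_toy = np.array([[1.0, 0.9, 0.0],
                  [0.0, 0.1, 1.0]])
print(mutual_coherence(L_toy))
```

A value close to 1 means that at least two columns are nearly indistinguishable from the sensors' point of view. Standard coherence-based guarantees for l1-norm recovery with unit-norm columns only cover signals with fewer than (1 + 1/μ)/2 nonzero entries, so they become very restrictive as the coherence μ approaches 1.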

Athina P. Petropulu received her undergraduate degree from the National Technical University of Athens, Greece, and the M.Sc. and Ph.D. degrees from Northeastern University, Boston, MA, all in Electrical and Computer Engineering. She is a Distinguished Professor in the Electrical and Computer Engineering (ECE) Department at Rutgers, having served as chair of the department during 2010-2016. Before joining Rutgers in 2010, she was on the faculty at Drexel University. She held Visiting Scholar appointments at SUPELEC, Université Paris-Sud, Princeton University, and the University of Southern California. Dr. Petropulu’s research interests span the areas of statistical signal processing, wireless communications, signal processing in networking, physical layer security, and radar signal processing. Her research has been funded by various government and industry sponsors, including the National Science Foundation (NSF), the Office of Naval Research, the US Army, the National Institutes of Health, the Whitaker Foundation, Lockheed Martin, and Raytheon.