Prof. Tülay Adali receives prestigious Humboldt Research Award for advanced data analysis

UMBC’s Tülay Adali, professor of computer science and electrical engineering (CSEE) and distinguished university professor, has received the prestigious Humboldt Research Award. The Alexander von Humboldt Foundation describes the award as presented to scholars “whose fundamental discoveries, new theories, or insights have had a significant impact on their own discipline and who are expected to continue producing cutting-edge achievements in the future.”

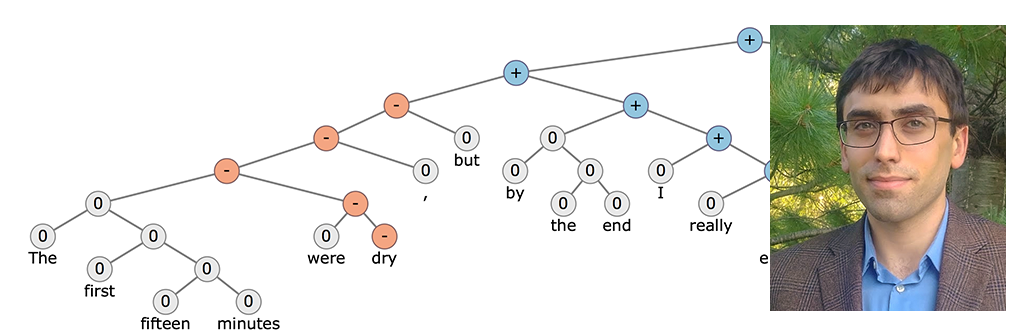

Adali is the director of UMBC’s Machine Learning for Signal Processing Lab. Her research focuses on developing flexible methods for data fusion. These methods enable researchers to extract powerful features from multi-modal data by letting the different modalities fully interact with and inform each other.

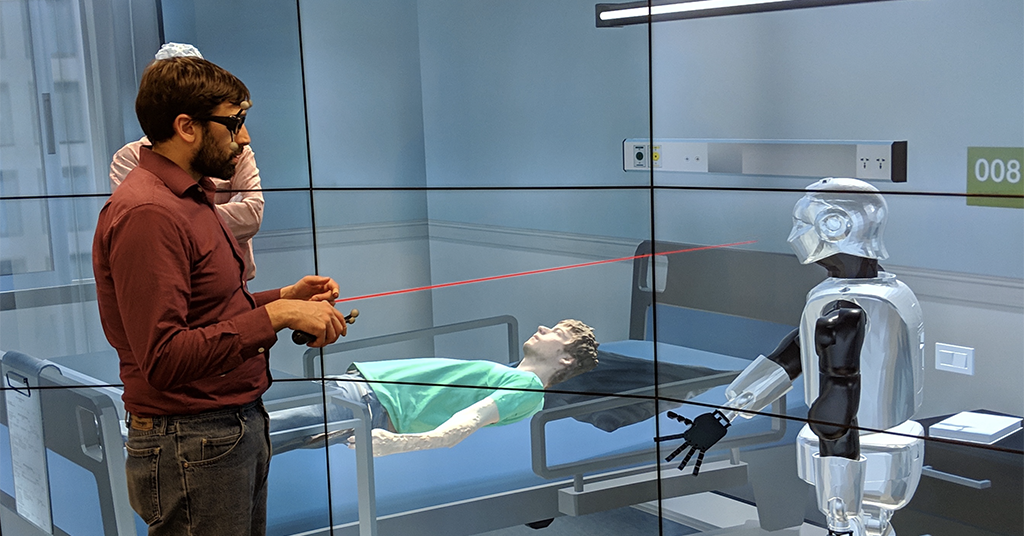

A main application area of her work has been medical image analysis, where these features are used in diagnosis as well as treatment planning and evaluation. Adali and her research collaborators are also exploring applications of these methods in remote sensing, misinformation detection, and gesture and video analysis.

A years-long research collaboration

Humboldt Award recipients spend up to one year conducting collaborative research at institutions in Germany. Adali plans to continue working with her longtime collaborator Peter Schreier, who is based at Paderborn University. Adali says that, through a research connection spanning many years, her lab and Schreier’s continue to have wonderful synergy.

Together, Adali and Schreier have worked to address problems such as data-driven discovery of relationships in multi-modal data, particularly when sample sizes are small. “This is a key practical problem in many applications, especially in the medical domain,” Adali shares. She notes that this provides an important starting point for their current work.

“Things are moving along, even though I could not travel this summer, as we started having weekly research meetings between our groups,” Adali says. “This is a valuable experience for my students. In the past, we had hosted Schreier and his students here at UMBC, some of my students had met Schreier and his students at conferences before, and these initial physical connections matter. I am hoping we will all be able to travel again, soon.”

Receiving the award

As a Humboldt Award recipient, Adali was invited to attend a gathering in June with her fellow awardees, hailing from universities around the world. Due to COVID-19, the event was moved online. Awardees had an opportunity to meet the German president virtually as part of the event.

While she wishes the event could have been held in person, Adali says that it gave her an exciting opportunity to connect with other Humboldt awardees and learn more about scientist and explorer Alexander von Humboldt.

In 2015, Curtis Menyuk, professor of CSEE, received a Humboldt Award.

Adapted from a UMBC News article written by Megan Hanks. Banner image: Tülay Adali, fourth from left, with the members of her lab. Photo courtesy of Adali.